App A/B Testing: Google Play Experiments and ASO

May 9th, 2020

by David Quinn

VP of Strategy & Partnerships at Gummicube, Inc.

App A/B testing is a valuable tool for developers trying to improve their App Store Optimization. Running A/B tests can identify what users respond to best across an app listing, from the creative designs to the descriptions. To run these tests properly, developers need to understand how Google Play Experiments work and the best practices for running A/B tests.

A/B Testing on Google Play

Developers with apps on Google Play can use Google Play Experiments to test variants of a store listing. Each experiment runs one or more variants against the existing version to see which gains a higher percentage of conversions, so that developers can publish the best-performing version. Experiments can test screenshots, videos, icons, feature graphics, short descriptions and long descriptions.

Developers can run default graphic tests for creative elements, or localized experiments for any element in up to five languages. Short and long descriptions can only be tested in localized experiments.

Setting Up Google Play Experiments

To launch an A/B test, go to the Google Play Console, click on your app and open the “Store presence” menu on the left. Under that, click on “Store listing experiments.”

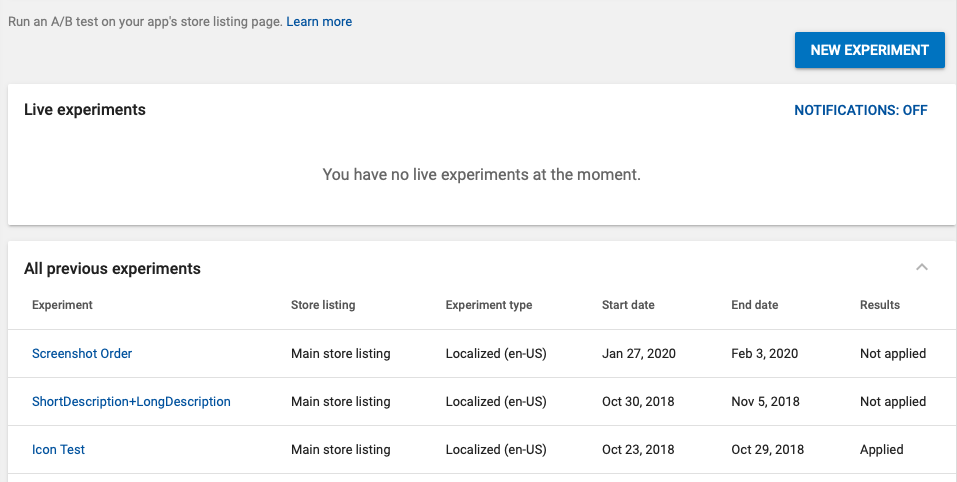

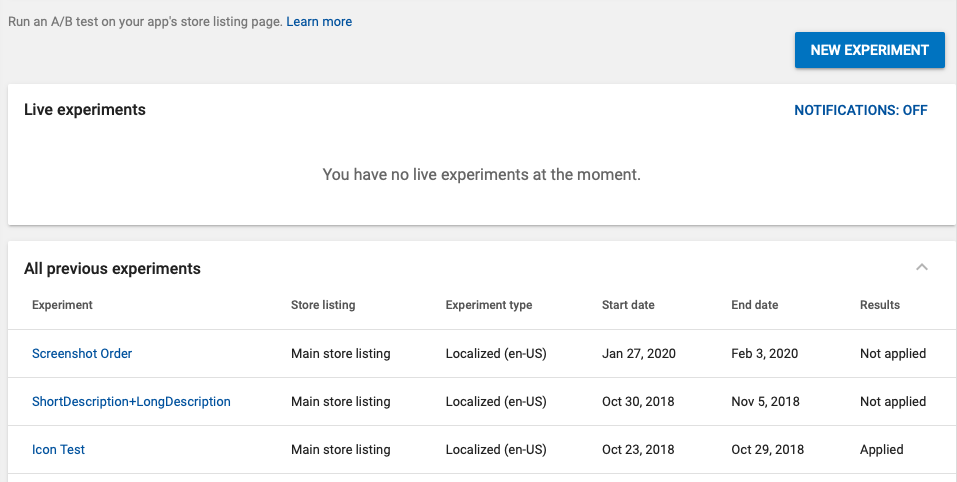

From there, you can see any live experiments and a list of previous experiments. This is also where you can launch a new experiment.

When you click “New Experiment,” you’re taken to a page where you can add information about the test you want to run. This includes which store listing to run it on (for apps available in multiple storefronts), what kind of experiment it is (graphics, description, etc.) and how the audience will be split. While you can choose to divide the audience into any percentage, such as a 50-50 or 70-30 split, the control version cannot receive a smaller share than the variants.

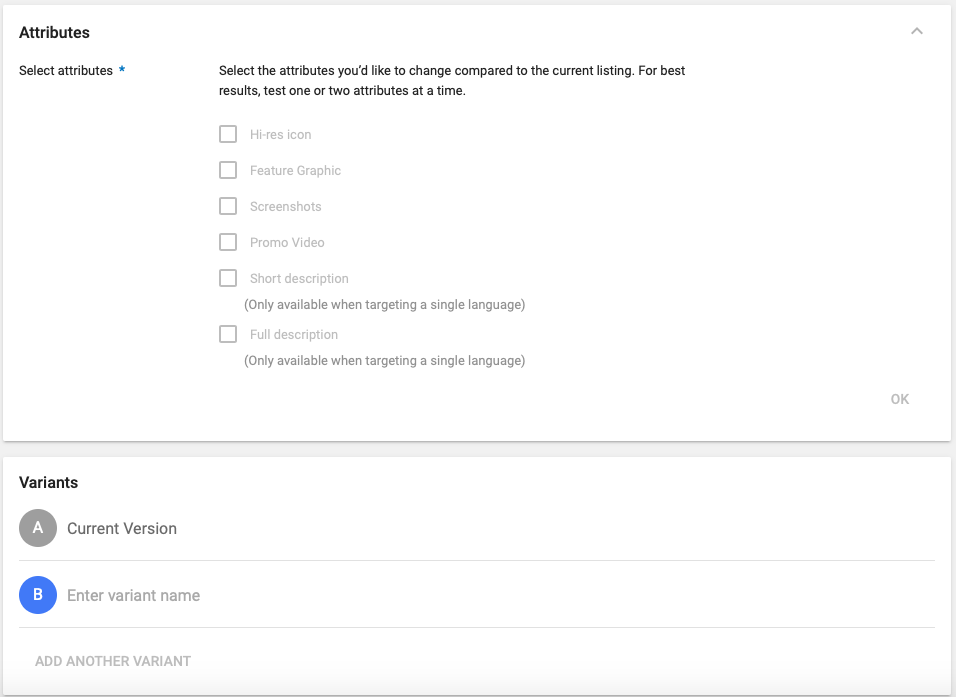

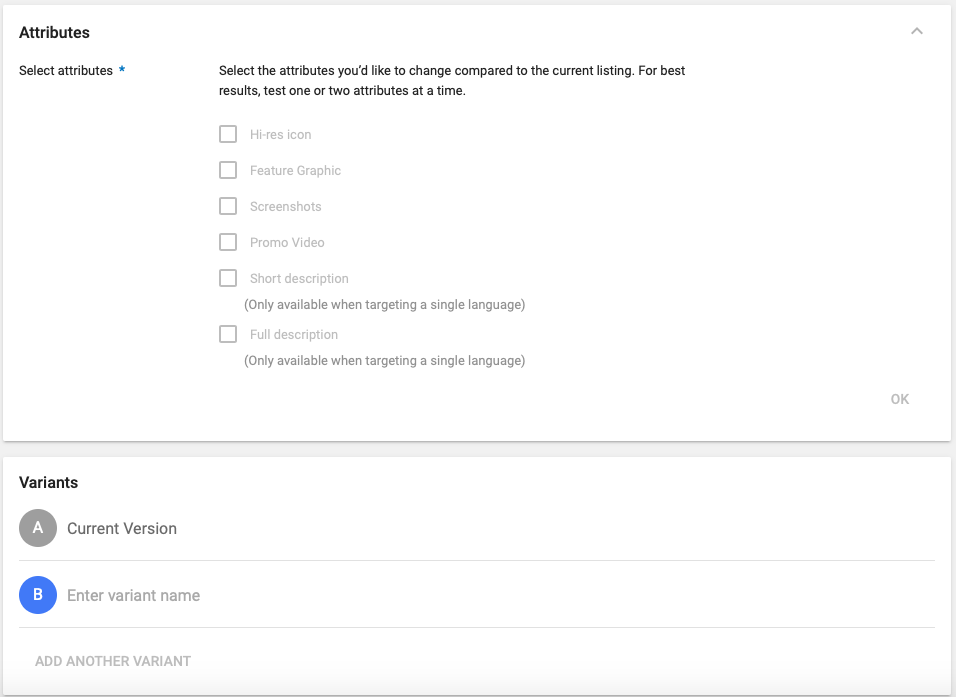

You can select what attributes are changing compared to the existing version, such as the screenshots or descriptions. It is important to note that graphic assets like videos and icons can be tested regardless of language, but description changes can only be tested when targeting a single language.

You can also enter names for the variants. This can help identify specific differences being tested like “Character Set A” or “Feature-focused value proposition.”

Mobile App A/B Test Insights

When you run a Store Listing Experiment, the Google Play Console will present you with data throughout the test. It tracks several metrics:

How many new installers you gain during the experiments

What versions and variants are being tested

What percentage of the audience sees which variant

The number of installers each version gained

How many installers each version would have gained had it run on its own, receiving the full audience, rather than as part of an experiment

Variant performance compared to the current version

The confidence interval of the test results
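To make the "would have gained on its own" metric concrete, one simple way to approximate it is to divide each version's installers by the share of the audience it received. This is an assumption for illustration; the console's exact calculation is not described here, and `scaled_installers` is a hypothetical name.

```python
def scaled_installers(installers, audience_share):
    """Estimate installers a version would have gained with 100% of traffic.

    audience_share is a fraction between 0 and 1 (e.g. 0.5 for a 50% share).
    Assumption: installs scale linearly with the share of traffic received.
    """
    if not 0 < audience_share <= 1:
        raise ValueError("audience_share must be in the range (0, 1]")
    return installers / audience_share


# Hypothetical numbers: the control saw 50% of traffic, the variant 50%.
print(scaled_installers(1200, 0.5))
print(scaled_installers(1320, 0.5))
```

Comparing scaled figures rather than raw counts keeps the comparison fair when the audience is not split evenly.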

The performance is presented as a range with a 90% confidence interval. In other words, if you apply the variant, you can expect with roughly 90% confidence that its actual performance relative to the current version will fall within that range. The further the bar sits toward the positive end, the more likely it is that the variant will outperform the existing version.
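As a rough illustration of what a 90% confidence interval represents, the sketch below computes one for the difference in conversion rate between a variant and the control, using a standard normal approximation for two proportions. This is not Google's published method, and it assumes you know how many store listing visitors each version received; the function name and all numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def lift_interval(control_installs, control_visitors,
                  variant_installs, variant_visitors, confidence=0.90):
    """Confidence interval for (variant rate - control rate).

    Uses the normal approximation for the difference of two proportions.
    """
    p_c = control_installs / control_visitors
    p_v = variant_installs / variant_visitors
    diff = p_v - p_c
    # Standard error of the difference between two independent proportions.
    se = sqrt(p_c * (1 - p_c) / control_visitors
              + p_v * (1 - p_v) / variant_visitors)
    # Two-sided critical value, about 1.645 for 90% confidence.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return diff - z * se, diff + z * se


# Hypothetical data: 10,000 visitors per version.
low, high = lift_interval(500, 10_000, 560, 10_000)
print(f"90% interval for the difference: {low:.4f} to {high:.4f}")
```

When the whole interval sits above zero, as it does with these example numbers, the variant is likely to outperform the control. An interval that straddles zero means the test has not yet separated the two.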

Mobile A/B Testing Best Practices

When running tests, developers should keep several best practices in mind for App Store Optimization:

Test all elements, but one at a time. This helps identify what specific aspects perform best, so they can be implemented and improved upon.

Tests should be iterative. Use each test’s successes to improve the next version even further.

Let the tests run long enough to gather enough data. A single day or even a week does not necessarily indicate a trend, but the more traffic volume an app receives, the faster it will gather the data. Focus on the long term.

While you can split the audience in any way, an evenly divided test audience is advised for the clearest results.

Following these best practices can help ensure the tests provide the most accurate data. They will help you identify which specific changes performed well and give you a clear direction for future improvements.
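To get a feel for how long "long enough" might be, a common back-of-the-envelope approach is to estimate the number of visitors each version needs before a given lift becomes detectable, then divide by daily traffic. The sketch below uses a standard two-proportion sample size approximation and assumes an evenly split audience; the 80% power setting, the function names and the traffic figures are illustrative assumptions, not values taken from Google Play.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_arm(baseline_rate, relative_lift, confidence=0.90, power=0.80):
    """Approximate visitors needed per version to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(0.5 + confidence / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_needed(per_arm, daily_visitors, arms=2):
    """Days to reach the target, assuming traffic is split evenly across arms."""
    return ceil(per_arm * arms / daily_visitors)


# Hypothetical app: 5% baseline conversion, hoping to detect a 10% relative lift.
n = visitors_per_arm(0.05, 0.10)
print(n, "visitors per version")
print(days_needed(n, daily_visitors=2_000), "days at 2,000 visitors per day")
```

Smaller expected lifts or lower traffic push the required duration up quickly, which is why a single day or week of data is often not enough.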

Understand & Run Mobile App A/B Tests

App A/B Testing is an excellent strategy for App Store Optimization. Running tests can provide clear feedback on how well variants perform, enabling developers to optimize with confidence. In order to run experiments properly, developers should understand how experiments work and what the best practices are. This will help you improve every element of the Play Store listing.

Want more information regarding App Store Optimization? Contact Gummicube and we’ll help get your strategy started.
